Google is launching a new “multi-search” function in Google Lens, its image recognition technology, today that allows users to search using text and photos at the same time. Google previewed the feature last September at its Search On event, saying it would be available in the coming weeks after testing and assessment. The new multi-search functionality is now available in the United States as a beta feature in English.

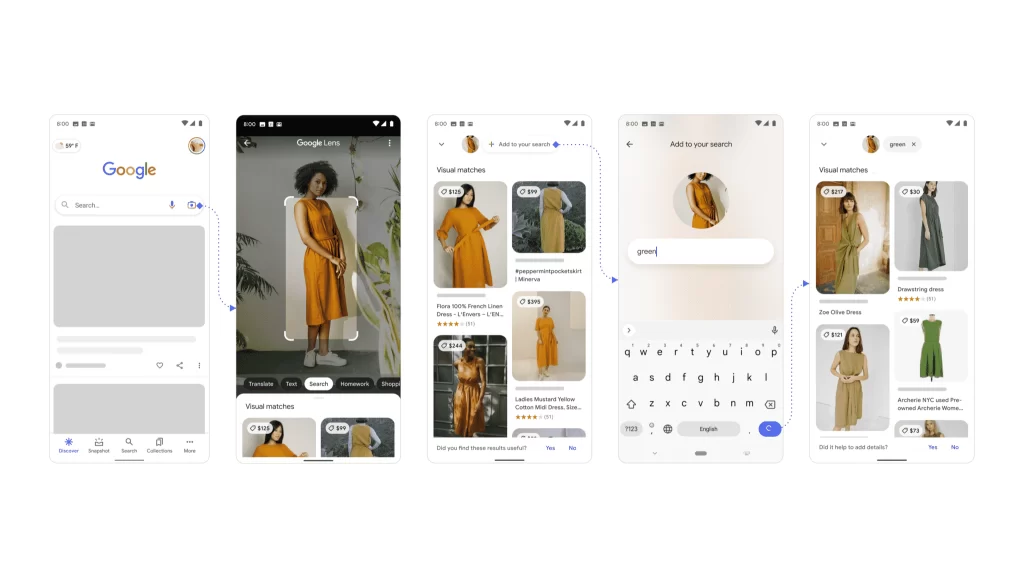

To begin, open the Google app on Android or iOS and tap the Lens camera button, then either take a photo or search using one of your screenshots. You can then add text by swiping up and tapping the “+ Add to your search” button. According to Google, users need the most recent version of the app to use the new feature.

Google’s New Feature Will Let Users Search For Things Using Words And Images

With the new multi-search tool, you can ask a question about an object in front of you or narrow your search results by color, brand, or visual attributes. According to TechCrunch, the feature currently delivers its best results for shopping queries, with more use cases on the way.

You can do things other than shop with this initial test launch, but it won’t be great for every search. Here is how the feature works in practice. Let’s say you find a dress you like but don’t like the color it comes in. You could pull up a photo of the dress and then add the word “pink” to your search query to find it in the color you want.

The new feature might be especially useful for the types of queries that Google currently struggles to understand, such as those where you’re looking for something visual that’s difficult to describe with words alone. By merging the image and the text into one query, Google may have a better chance of producing relevant search results.